Adversarially Robust Learning via Entropic Regularization

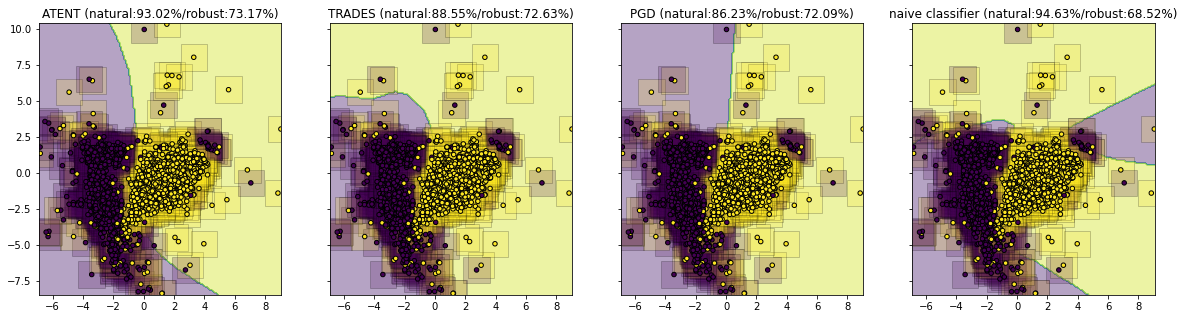

In this paper we propose a new family of algorithms, ATENT, for training adversarially robust deep neural networks. We formulate a new loss function equipped with entropic regularization. The loss accounts for the contribution of adversarial samples drawn from a specially designed distribution that assigns high probability to points that have high loss and lie in the immediate neighborhood of training samples. ATENT achieves competitive (or better) robust classification accuracy compared with several state-of-the-art robust learning approaches on benchmark datasets such as MNIST and CIFAR-10.
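To make the sampling idea concrete, here is a minimal sketch of one way to draw adversarial samples from a distribution that favors high-loss points near a training sample. It is an illustration under stated assumptions, not the ATENT implementation: the density form `p(x') ∝ exp(loss(x') - (gamma / 2) * ||x' - x||^2)`, the Langevin-style sampler, the toy logistic model, and every name and parameter below (`logistic_loss_and_grad`, `langevin_adversarial_sample`, `gamma`, `step`, `n_steps`, `noise_scale`) are hypothetical choices made for this example.

```python
import numpy as np


def logistic_loss_and_grad(x, y, w):
    """Logistic loss of a toy linear model and its gradient w.r.t. the input x.

    Assumes a label y in {-1, +1}; this stands in for a deep network's loss.
    """
    margin = y * np.dot(w, x)
    loss = np.logaddexp(0.0, -margin)          # log(1 + exp(-margin)), computed stably
    grad_x = -y * w / (1.0 + np.exp(margin))   # d(loss)/dx
    return loss, grad_x


def langevin_adversarial_sample(x, y, w, gamma=1.0, step=0.01, n_steps=50,
                                noise_scale=1.0, rng=None):
    """Approximately sample x' from p(x') ∝ exp(loss(x') - gamma/2 * ||x' - x||^2).

    The density is an assumed form: the loss term pulls toward high-loss points,
    and the quadratic term keeps samples close to the training point x.
    """
    rng = np.random.default_rng() if rng is None else rng
    x = np.asarray(x, dtype=float)
    x_adv = x.copy()
    for _ in range(n_steps):
        _, grad_loss = logistic_loss_and_grad(x_adv, y, w)
        # Gradient of the log-density: ascend the loss, stay near x.
        grad_log_p = grad_loss - gamma * (x_adv - x)
        noise = rng.standard_normal(x.shape)
        # Unadjusted Langevin update; noise_scale=0 reduces it to gradient ascent.
        x_adv = x_adv + step * grad_log_p + noise_scale * np.sqrt(2.0 * step) * noise
    return x_adv


# Hypothetical usage on a single 3-dimensional training point.
x = np.array([0.5, -1.0, 2.0])
w = np.array([1.0, -2.0, 0.5])
x_adv = langevin_adversarial_sample(x, 1.0, w, rng=np.random.default_rng(0))
```

In this sketch, a larger `gamma` concentrates samples more tightly around the training point, while a smaller one lets the loss term dominate. A training loop would then average the model's loss over such samples in place of a single worst-case perturbation.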

- G. Jagatap, A. Joshi, A. Chowdhury, S. Garg, and C. Hegde, “Adversarially robust learning via entropic regularization,” ICML Workshop on Adversarial Machine Learning, 2021, and Frontiers in Artificial Intelligence, 2021. [ Preprint ]